Blog

Discover insights and updates on our work in building reliable and interpretable AI systems.

Polygenic Prediction: The Problem, the Landscape, and What We're Building

Complex traits are 50–90% heritable, but today's best polygenic models explain only a fraction of that variance. We cover the market, the technical gaps in current methods, and what we're building at Reticular.

John Yang

Co-Founder & CEO

Breaking Open the Black Box: Making Protein Structure Prediction Interpretable

A look inside our new paper on interpretable protein structure prediction using sparse autoencoders, and what it means for the future of trustworthy protein AI.

Nithin Parsan

Co-Founder

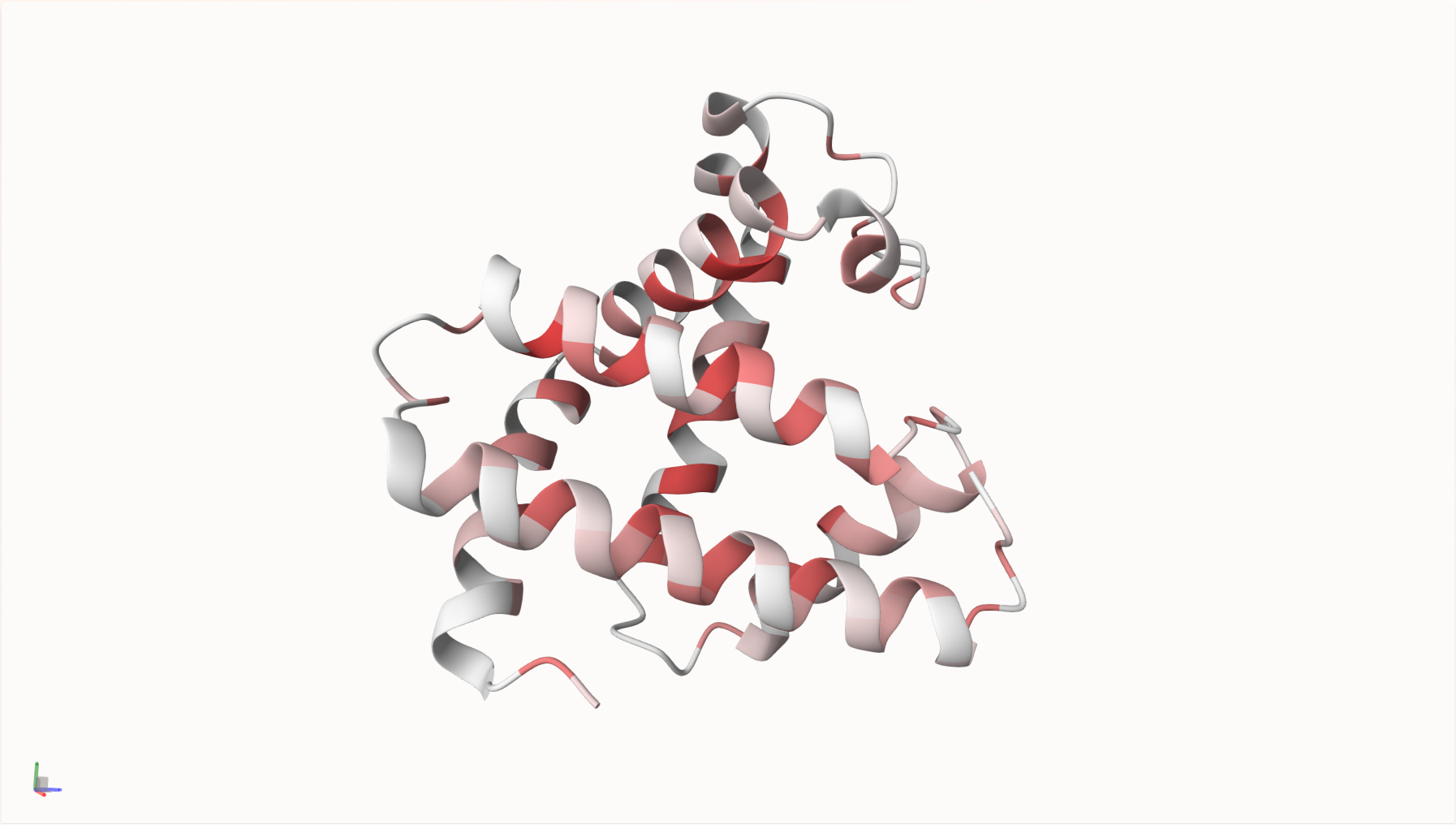

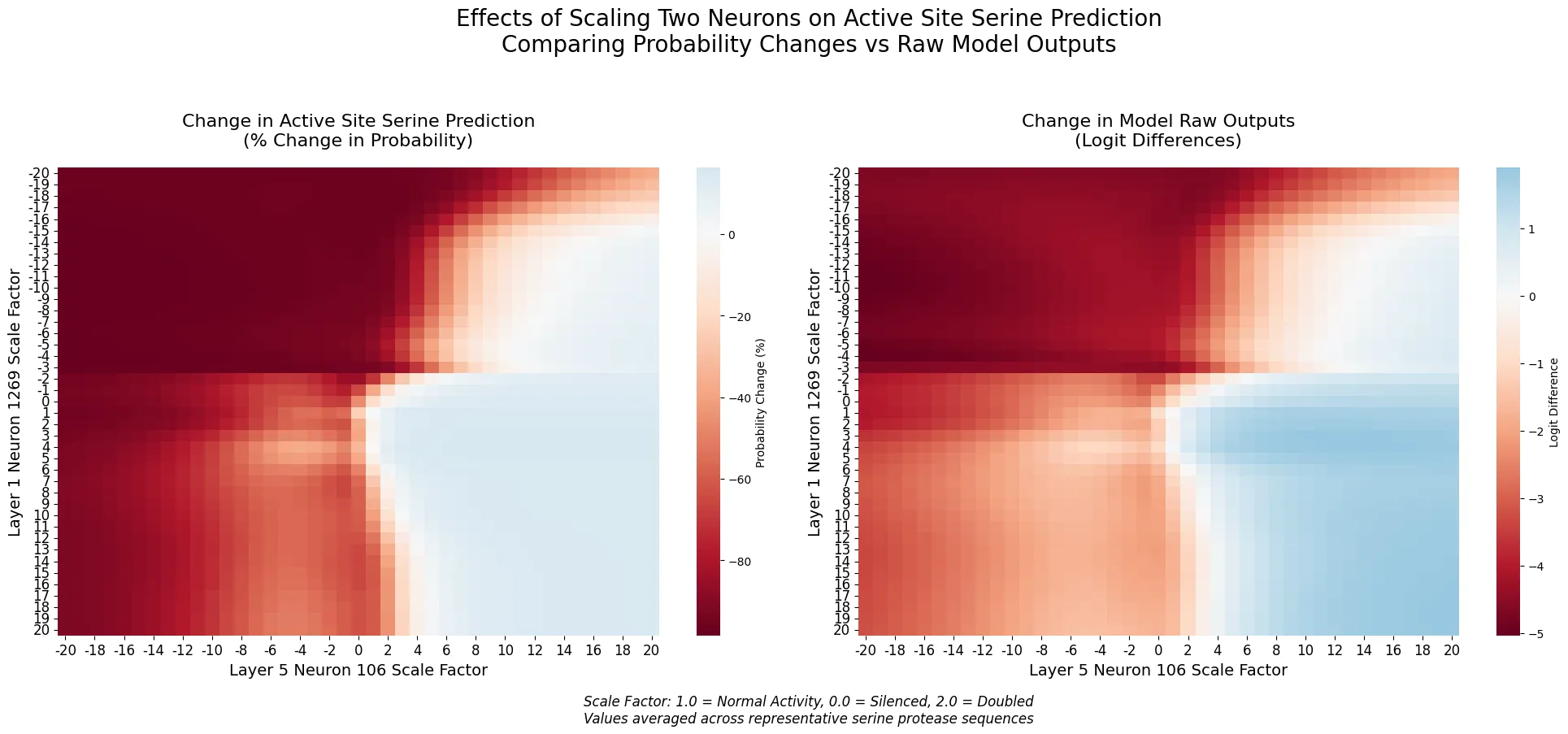

Neurons Encoding Catalytic Serines: A Case Study on Interpretability in Protein Models

We demonstrate how two neurons in a protein language model encode the catalytic machinery of serine proteases, showing how interpretability techniques can transfer to biological domains.

Nithin Parsan

Co-Founder

We've Launched on Y Combinator!

After months of hard work and development, we're excited to share our vision for steerable and interpretable AI for protein engineering with the world.

Nithin Parsan

Co-Founder